"Dumb" is a word you don't want to hear a writer say, especially when talking about a state-of-the-art AI like ChatGPT. But I feel at home with mean sharp little words like Punk, Cheap, and Dumb; they're much easier to pin down than Good, Happiness, or Love.

ChatGPT, like Nirvana's best song, is dumb.

It's a parlor trick, a party game, one of those things you show off at a dinner party to impress people with your intelligence.

As with "deepfake technology," once it's revealed to the world, people can quickly see through it and find its limits.

Here is ChatGPT's Achilles' heel, so to speak:

ChatGPT is Fooled by Easy Patterns

ChatGPT uses machine learning and conversations from human AI trainers to generate responses, and its answers are limited by how it was trained. According to OpenAI, ChatGPT is often excessively verbose because of a bias in its training data: the trainers preferred longer answers that looked more comprehensive.

Long answers also sound the most impressive... to those who are easily tricked.

It's like pulling a rabbit out of a hat or punching yourself square in the balls to impress your friends – it looks cool, but you know what the trick is in the end.

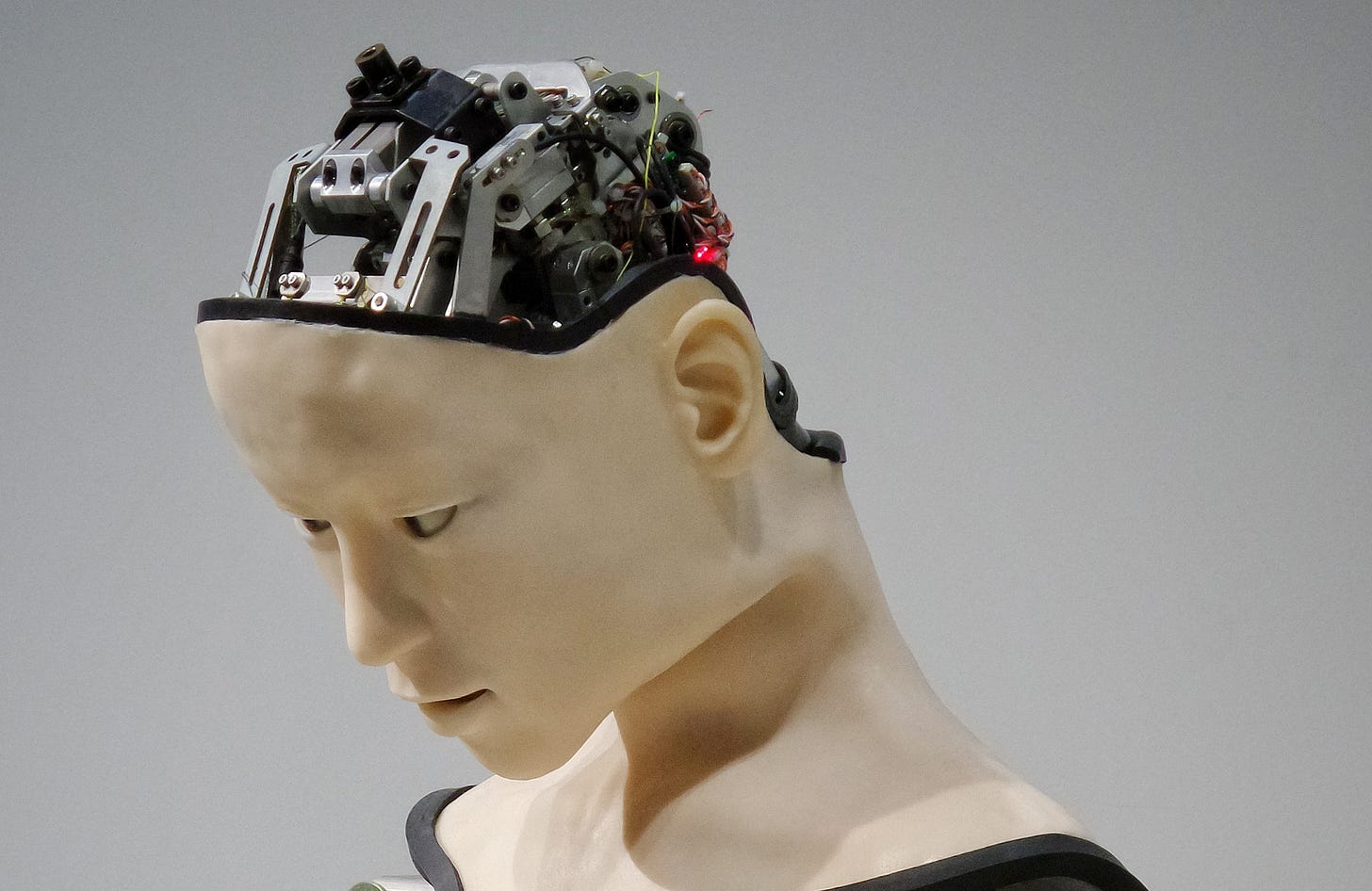

There's no real thought; no real understanding. ChatGPT is pattern recognition; Google Search with a touch of heart. It simply is not alive.

Even if we put up layers upon layers of abstraction, it is still a computer program interpreting and executing instructions. If it fundamentally lacks the concept of adaptation, it is nothing better than a new form of Google, but without hyperlinks or actual sources. Stack Overflow actually banned ChatGPT-generated answers, saying: “Overall, because the average rate of getting correct answers from ChatGPT is too low, the posting of answers created by ChatGPT is substantially harmful to our site and to users who are asking or looking for correct answers.”

But hey, it can also fool your college professor into giving you a C+ on a paper... or produce nothing at all:

ChatGPT is a step in the right direction. It's like a baby learning to crawl—it can only go so far, but it's still amazing to see how far it has come.

It's also a major disruptor of the coding industry.

As my friend Kelly Parks said, "Why not ask it to write a Python program that connects to a stock price API and writes current stock prices to a spreadsheet?"

I know another friend who uses ChatGPT to run a Star Trek-themed text adventure which lets him roleplay as a member of the bridge crew. He says it often does a very good job of emulating the characters' personalities and that chatting with them is more stimulating than chatting with most real people. My friends don't go outside much.

The Intelligent Internet is All Hype

“Artificial intelligence is about to be pushed out to the edge. So every person, country, and culture has their own constantly updated models, so you’ll have your own model of all your knowledge across modalities. And you can run it on your local hardware without access to the internet.”

— Emad Mostaque, Founder of Stability AI

"The intelligent internet" is 2023's Web 3.

It's a hype word like "metaverse" or "augmented reality." It's all marketing fluff to make new AI technologies seem revolutionary.

The latest comes from Emad Mostaque, founder of Stability AI and the ringleader of AI art and writing. According to Mostaque, AI will replace low-skilled jobs and many high-skilled professions too.

It's bullshit. Mostaque is probably a nice guy, but he markets AI like a snake-oil salesman peddling magical elixirs. Go sign up for Stability AI's Stable Diffusion and use the image generator. It doesn’t match the hype. The images are cartoonish and poor quality, and if you need Photoshop skills to tweak the image... well, what the hell do you need the AI for?

Additionally, listen to Mostaque in any interview. If it takes a Ph.D. to understand the real, direct use cases of AI, I'm not sure this is the greatest thing since sliced bread.

As with ChatGPT, however, I'm not against AI art.

I'm no artist but I believe knowledge can be found in anyone and in anything; I make no discrimination if I learn from AI. I also understand I'm not a machine and I can't churn out novels or multiple pieces of artwork in mere seconds.

There's something here, but it sickens me that AI's biggest advocates are mostly businessmen saying AI will make people more efficient, when really, it's about making money.

AI: What's Next?

AI is a tool like anything else: it is just like Grammarly and spell check, Unsplash, Canva, WordPress, computers, cellphones, or unlimited redos.

As I wrote earlier this month, if we ban anything, we should ban everything but pencil, paper and physical tools.

You can't stop the future. But the future is bad. And not the cool Ex Machina and Terminator singularity kind of bad. It's Troll 2 bad. Ed Wood bad.

The current iteration of AI is like locking a person in a box, in complete sensory deprivation, and expecting them to draw the Mona Lisa from memory.

It's half-baked.

But keep acting like it's the next big thing.

Who am I to rain on the party?